Performance analysis and tuning of large scale systems is a highly technical, skill based and methodical process. It takes a lot of time to gain the skill, knowledge and experience required to achieve a good outcome.

To help with this there are many software products designed to get to the core of a problem rapidly.

To help with this there are many software products designed to get to the core of a problem rapidly.

These software systems do not replace the need for a highly skilled specialist, despite what the marketing material might say.

MetricsAnalysis recognised this and developed a service - MetricsAnalysis for IBM Db2 (MA4DB2).

Specialist Db2 knowledge is not required on the client side.

MA4DB2 is a low impact, low cost, high value service built to use anonymised data. This is important if you are using an off-site service. Can it operate on anonymised data and still deliver?

This blog presents the results of a proof of concept MetricsAnalysis did for jSolutions, who source data from the web for analytics.

Background

jSolutions has a process that downloads XML documents from an internet feed and stores them as native XML in a Db2 database, for use in predictive analytics. The end-to-end process is run 4 times a day and takes approximately 3 hours per run.

Can the process time be reduced to enable more collections and lead to more accurate predictions?

Methodology

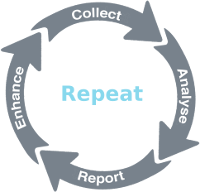

MetricsAnalysis uses a repeatable cycle of events to drive both cost reduction and enable risk management.

The Collect stage extracts 23 sets of Db2 metrics and configuration information

- This data is provided to MetricsAnalysis and processed through a Data Warehouse and Data Mart

- Using analytical methods to analyse the data, a report is produced that contains recommendations to address issues found in the analytic phase

- Implementation details are provided to enhance the analysed system

The cycle is repeated 2-3 times.

jSolutions Proof of Concept

3 cycles of analysis were used to effect change on the jSolutions Db2 implementation. These changes were in 4 key areas

- Registry Variables

- Db2 Instance Configuration

- Db2 Database Configuration

- Db2 Database Objects

Indexes are generally considered a means to improve performance. This was illustrated by jSolutions when they reported a reduction in runtime to 30 minutes by creating indexes. As part of the proof of concept, these were removed – to be added after the POC was complete. With this in mind, it must be noted that no indexes were created during the POC process.

Below is presented the results and the effects of the recommendations.

Workload Analysis

Firstly, the workloads are analysed to determine if any change can be explained through the workload processing. Is there more, or less, throughput that can be used to explain any differences in resource consumption?

In the comparison between the workloads the following can be observed

- a 5.96% increase in SELECT statements

- a 6.11% increase in commits

- an increase of 7.2% in the number of transactions

Although there is a 56.88% increase in Update/Insert/Delete statements, a 50% increase in failed statements a 27.62% increase in rollbacks and a 21.86% increase in call statements, for the workload this constitutes a tiny overall percentage as can be seen below, in the distribution of processing within the workloads

The workload distribution shows that at 59.26 % the majority of the workload is SELECT statements followed at 38.19% by COMMIT statements.

Have the changes had a positive outcome for CPU consumption? This is important, as a reduction in CPU can have a direct impact on costs related to CPU usage. For example licensing costs and/or hosting costs.

Is there an impact on IO processing? A reduction in IO may lead to a reduced need for faster disc, which means that less expensive disc can be used, further reducing costs.

Below we look at the impact of the changes on CPU and IO.

CPU

The CPU comparison uses metrics taken at the database level. This is important as at this level the CPU records a number of other elements consuming CPU including backup and utility processing.

The CPU comparison uses metrics taken at the database level. This is important as at this level the CPU records a number of other elements consuming CPU including backup and utility processing.

Ideally a comparison of the CPU consumption by SQL statement would be used but the configuration at the start did not permit the data to be collected.

We average the total CPU consumption to a SQL statement and transaction level to get a picture of the change in consumption.

The total CPU time has reduced by 69.87%; the average CPU per SQL statement has reduced 71.73%; the average CPU per transaction has reduced 71.9%.

IO

When looking at IO, we look at the physical reads and writes, and the times for these. This will give an indication of the effect of the changes on IO processing.

There is a 14.9% reduction in physical write time and a 97.02% reduction in physical read time.

These are a result of the reduction of 87.03% in physical writes and a reduction of 86.82% in physical reads.

Observations

MetricsAnalysis for IBM Db2 uses analytical methods on the metrics to determine the style of workload, which determines the configuration and design options that will best suit the workload. These are presented, along with the analysis with recommendations and implementation instructions.

The workload presented by jSolutions manifested in the processing style of decision support (DSS) and data warehouse (DW). These tend to have high scans, heavy sort and temporary data usage, although there was no indication of heavy sort for the presented workload.

DSS and DW processing require separate performance tuning considerations to a traditional operational application. It is critical to understand the application processing style and tune accordingly.

Elements of the analysis are discussed below.

Key changes

Given the substantial resource savings, were there any key areas presenting as the problem?

The answer is that MetricsAnalysis for IBM Db2 does a multi-dimensional analysis to identify processing and configuration conflicts - every time.

These conflicts are addressed in the client report depending on the impact the conflict contributes. Due to the way in which change can affect the workload it is sometimes prudent to implement change and see its impact before addressing other issues.

The number and type of changes were as follows

- Registry – 1

- Database Manager Configuration – 1

- Database Configuration – 6

- Database Objects

- Bufferpool – 5

- Tablespace – 34 (16 changed and 18 new)

So much gain from such little change, and all achieved in 3 cycles.

- Collect → Analyse → Report → Enhance

- Collect → Analyse → Report → Enhance

- Collect → Analyse → Report → Evaluate

The third cycle did not incur any further change.

Findings

It is clear that the changes made have had a substantial effect on resource consumption. This is reflected in the run time for the application, reduced from approximately 3 hours to 15 minutes – a 91.7% reduction in processing time.

A key finding of the analysis is that Db2 does not work well in a DSS/DW environment with autonomics enabled for all memory areas (Self Tuning Memory Manager). In particular, the bufferpool, package cache and sort areas were poorly managed.

Tuning of the bufferpool, IO and sort areas had the greatest impact.

Corollary

The last question to be addressed is 'what about the indexes'? When they were added back, what impact was there?

The answer is very striking. There was no real perceived change in the time taken to run the processing.

However, there was a 46% INCREASE in CPU. Db2 was processing the workload in a different manner, however not quite to the benefit in resource consumption that would be expected.

Conclusion

As stated at the start, performance analysis and tuning of large scale systems is a highly technical, skill based, and methodical process.

Traditional methods of database tuning, for example creating indexes, although providing a perceived benefit, may in fact be masking bigger savings. MetricsAnalysis for IBM DB2 implements multi-dimensional analytical methods for identifying alternatives for optimising resource consumption.

What applications are there for such an analysis?

- Virtualisation – if you are considering moving to a virtualised Db2 environment, it would be prudent to ensure that Db2 and its use are aligned for optimal resource consumption. This will help keep costs down when the decision is made to go virtual.

- Cloud based Db2 – moving to the cloud. It's the in-thing, are you considering making the move? If you are then consider optimising your Db2 configuration before making the move. When moving your Db2 processing to the cloud, ensure that you start with the same optimised configuration. This will help keep your Db2 Cloud ongoing costs down.

- DevOps – in a DevOps environment, things are moving fast to deliver benefit for the Business. With MetricsAnalysis for IBM Db2, that doesn't mean having to compromise on the quality of the delivered product. Using our Workload Compare it is possible to determine the impact of change and to identify where in the system the change has impacted most. The decision to deploy can now be made based on a risk management strategy – a small increase in CPU can be absorbed in production. GO!; a large increase in IO will slow the system down too much. STOP!

What journey are you on? Have a Db2 specialist available at every step for a fraction of the cost– MetricsAnalysis for IBM Db2.

Terms & Conditions

Report